Workflows

Builds show what I make. Workflows show how you can make it too. Each one is a real recipe I ran on real work, with the exact prompts, commands, and artifacts. Copy what helps.

- 10 minutes | $0 (recipe runs on a bundled sample inbox) | Beginner

Inbox → Action Queue (without touching real mail)

A take-home recipe that turns a bundled 50-thread sample inbox into a ranked action queue. Read-only pattern, human review required, zero credentials needed.
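A minimal sketch of the ranking step, in Python. The thread fields (`from_vip`, `has_deadline`, `unread_days`) and the scoring weights are hypothetical, chosen only for illustration; the bundled extraction script may work differently. It touches nothing but in-memory sample data and emits a Markdown checklist for human review.

```python
from dataclasses import dataclass


@dataclass
class Thread:
    subject: str
    from_vip: bool      # hypothetical flag: sender is on a priority list
    has_deadline: bool  # hypothetical flag: body mentions a due date
    unread_days: int    # days since the last unanswered message


def score(thread: Thread) -> int:
    """Toy priority score; the weights are illustrative assumptions."""
    return 3 * thread.from_vip + 2 * thread.has_deadline + min(thread.unread_days, 5)


def to_markdown_queue(threads: list[Thread]) -> str:
    """Rank threads by descending score and render an unchecked task list."""
    ranked = sorted(threads, key=score, reverse=True)
    lines = ["# Action queue (review before acting)"]
    for t in ranked:
        lines.append(f"- [ ] ({score(t)}) {t.subject}")
    return "\n".join(lines)


sample = [
    Thread("Newsletter digest", False, False, 1),
    Thread("Contract renewal due Friday", True, True, 2),
    Thread("Intro request", False, False, 4),
]
queue = to_markdown_queue(sample)
print(queue)
```

The output is plain text with unchecked boxes, so nothing is acted on until a person ticks an item.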

Bundled sample inbox | Local extraction script | Markdown queue file

- 15 minutes | $0 (recipe uses pre-rendered placeholder cards) | Beginner

Prompt → Visual Asset QA before anything ships

A take-home recipe that runs a 4-variant QA grid on a bundled sample prompt before any image lands on a public surface. Brand-safety, on-prompt fidelity, embedded-text check, alt-text candidate.

Bundled sample prompt | 4 placeholder variants | QA grid template

- 60 seconds for the auto report, 2 to 3 minutes if you also run the manual channel checks | $0 | Beginner

Check whether your site is discoverable to ChatGPT, Claude, Perplexity, and Google

Paste your URL. The demo runs real checks against your homepage, robots.txt, sitemap.xml, and llms.txt, then hands you copy-ready prompts to verify whether each LLM channel can actually answer about your site.
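The robots.txt part of such a check can be sketched offline. This is an illustrative Python version using the standard library's `urllib.robotparser`, not the demo's Next.js route handler. The crawler tokens and the sample robots.txt are assumptions for illustration; confirm each vendor's current user-agent string before relying on them.

```python
from urllib.robotparser import RobotFileParser

# Crawler names assumed for illustration; confirm each vendor's current token.
AI_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Googlebot"]

# Made-up robots.txt that blocks one crawler and allows everyone else.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def crawl_access(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Return, per user agent, whether robots.txt permits fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


report = crawl_access(SAMPLE_ROBOTS, "https://example.com/", AI_AGENTS)
for agent, allowed in report.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

A crawler being allowed only means it may fetch the page; whether a given assistant can actually answer about the site still needs the manual prompt checks.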

Next.js route handler | fetch | robots.txt parser | +1

- 45 to 90 minutes | $0 to $25 | Intermediate

Research a physical product supply chain from one SKU

A repeatable SWT workflow for sellers, resellers, importers, and operators who need a defensible first-pass supply-chain map from a product label, SKU, or package photo.

Package photos | Retailer pages | Official brand pages | +3

- 45 to 75 minutes | $5 to $20 | Intermediate

Demo GPT-5.5 with an inbound-lead-to-proposal pack

A visible GPT-5.4 vs GPT-5.5 workflow demo for founders and operators: give both models the same messy inbound lead packet, then compare how much of the proposal, CRM update, follow-up email, and risk review each lane completes.

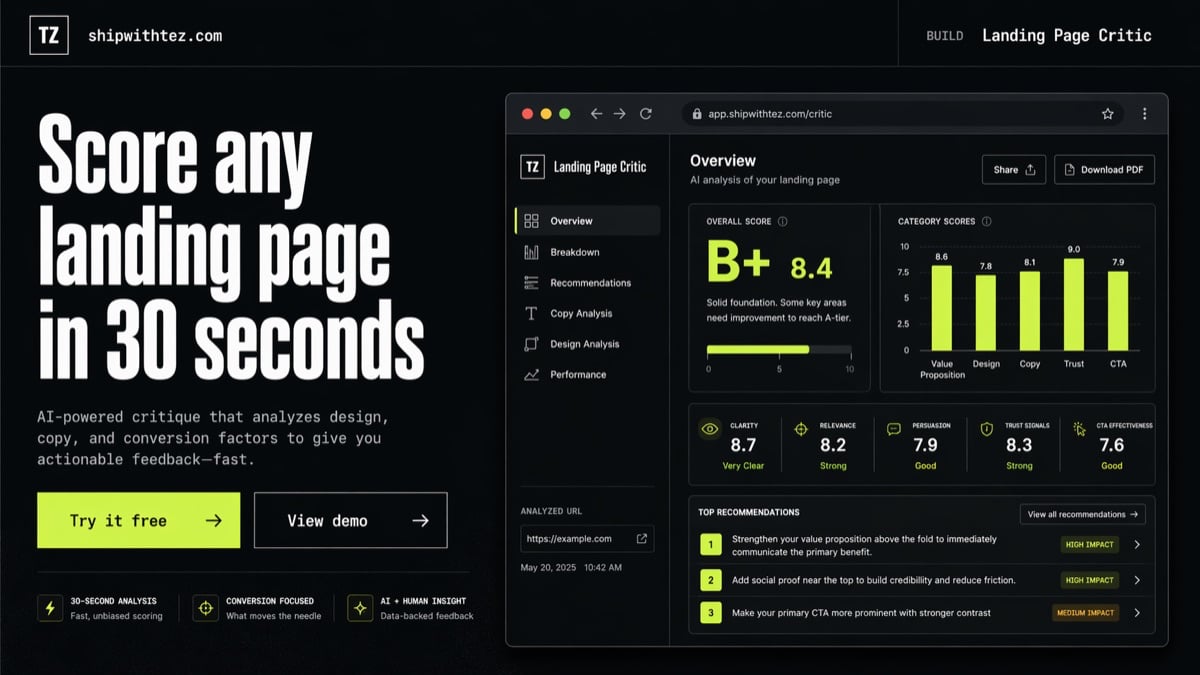

GPT-5.4 | GPT-5.5 | CRM notes | +3

- shipped on this site | 60 words → this hero | gpt image 2 · 15 min | 15 to 20 minutes | $0 via ChatGPT Plus | Beginner

Prompt-to-production landing page hero in under 20 minutes

The thumbnail on the Landing Page Critic build card is a GPT Image 2 output from my launch-day comparison, dropped into production in 15 minutes. Here's the 60-word prompt that got it, the export recipe, and why this replaces the 'hire a designer' step for a single-founder project.

GPT Image 2 | ChatGPT Plus (Thinking mode) | sips (macOS) | +2

- 30 seconds per QA pass | $0 (uses your Claude subscription) | Beginner

QA your own feature with Chrome MCP before you ship it

Stop Cmd+tabbing through your dev server. Hand the localhost URL to Claude, describe what should work, and get screenshots of every state, a ranked issue list, and a pass or fail in 30 seconds. Catches the regression the rushed manual click misses.

Claude Code | Chrome DevTools MCP | localhost

- live artifact | 15 minutes | $0 | Intermediate

Extract a Claude Design artifact into a live app component

Claude Design ships beautiful artifacts, but only as preview URLs. This workflow pulls the real HTML into your own app so you can embed it, theme it, and ship it: no Tailwind imitation, no screenshots pretending to be proof.

Claude Code | Chrome DevTools MCP | Next.js | +1

- 20 minutes | ~$0.10 – $0.50 | Beginner

A/B test AI models on the same task before you commit

Same prompt, same inputs, two models. Surface the real differences on a task that matters to you, in 20 minutes, instead of picking based on someone else's benchmark.
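The core of the comparison is a harness that sends one identical prompt to each model and records what comes back. Below is a minimal Python sketch under stated assumptions: a "model" is any function from prompt text to response text, and `stub_a` and `stub_b` are placeholder stand-ins for whatever CLI or API call you would actually wrap.

```python
import time
from typing import Callable

# A "model" here is any function from prompt text to response text.
# Real runs would wrap a CLI or API call; the stubs below are placeholders.
Model = Callable[[str], str]


def run_ab(prompt: str, models: dict[str, Model]) -> list[dict]:
    """Send the identical prompt to each model and record output and latency."""
    results = []
    for name, call in models.items():
        start = time.perf_counter()
        output = call(prompt)
        elapsed = time.perf_counter() - start
        results.append({
            "model": name,
            "output": output,
            "seconds": round(elapsed, 3),
            "words": len(output.split()),
        })
    return results


def stub_a(prompt: str) -> str:
    return f"Short answer to: {prompt}"


def stub_b(prompt: str) -> str:
    return f"A longer, more detailed answer to: {prompt}"


results = run_ab("Summarize this changelog", {"model-a": stub_a, "model-b": stub_b})
for row in results:
    print(row["model"], row["words"], "words", row["seconds"], "s")
```

Word count and latency are only the cheapest signals to capture; the judgment that matters is still reading both outputs side by side against your own task.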

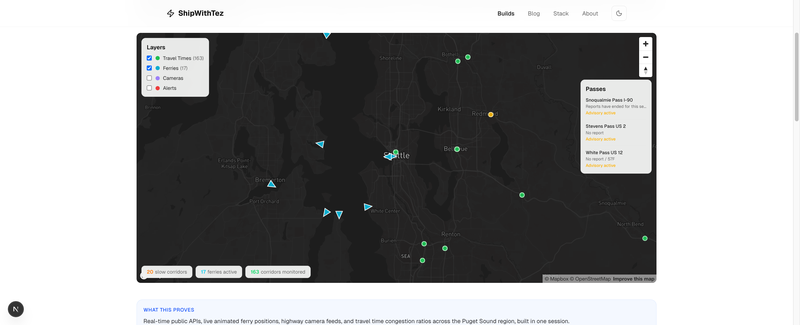

Claude Code | Codex CLI | Any two LLMs | +1

- 1 of 4 shipped | real dataset | 3 – 5 hours | $0 | Intermediate

Turn a public dataset into a live explorer in one evening

Raw CSV or JSON from any open data source becomes a filterable, shareable explorer on your own domain. No backend, no API keys, no runtime cost. Proof lives on-site. I've run this four times.
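The data side of a no-backend explorer can be as small as parsing the file once and filtering rows in memory. This is a hedged Python sketch, not the Next.js implementation: the column names and sample rows are made up, and serializing to a static JSON payload is one assumed way to avoid a runtime backend.

```python
import csv
import io
import json

# Tiny stand-in for a public dataset; column names are hypothetical.
RAW_CSV = """\
city,year,value
Austin,2023,41
Boston,2023,37
Austin,2024,45
"""


def load_rows(text: str) -> list[dict[str, str]]:
    """Parse CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def filter_rows(rows: list[dict[str, str]], **criteria: str) -> list[dict[str, str]]:
    """Keep rows whose columns equal every given criterion (exact match)."""
    return [r for r in rows if all(r.get(k) == v for k, v in criteria.items())]


rows = load_rows(RAW_CSV)
austin = filter_rows(rows, city="Austin")
static_payload = json.dumps(rows)  # could be written to a file at build time
print(len(rows), "rows;", len(austin), "match city=Austin")
```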

Next.js | CSV/JSON parsing | A chart library | +1

- 2 hours initial setup + 15 min/day | $0 | Intermediate

Karpathy's 4 principles for AI memory, implemented end-to-end

Karpathy's April 2026 post named the four constraints any serious personal AI memory system needs: Explicit, Yours, File over app, BYOAI. Here's each one translated into concrete implementation, with the pieces you actually install on day one.

Obsidian (or any markdown editor) | git | Claude Code / Codex / Gemini CLI | +1

- 30 min/week | $0 | Beginner

Promote daily notes into agent knowledge that compounds

Capturing everything is easy. The compounding is in what you promote. A weekly rhythm that turns raw daily captures into curated, LLM-readable learnings your agents treat as ground truth.

Your markdown vault | Any AI agent with full vault access | Calendar (for the weekly slot)

- 10 minute read | $0 | Beginner

The LLM-native memory pattern: a short field report

A short, dated history of how the 'personal markdown wiki for AI agents' pattern emerged, who shipped what, and why it all converged on the same four properties. Reading this first makes every other AI-memory recipe make more sense.

A browser | Maybe a highlighter

- Three focused evenings | $0 – $220/mo depending on how many agent subscriptions you run | Advanced

Run a personal LLM-native operating system (the Life OS stack)

The markdown wiki is the foundation. This is the system on top of it: multi-agent orchestration, atomic session protocols, cron automation, mobile bridges, and live data feeds. What you end up with is not a notebook but an operating system for your own attention and work.

Your existing markdown vault | Claude Code | A second agent (Codex, Gemini CLI, OpenCode) | +3